There's a certain satisfaction that comes from running your own personal server infrastructure. It's a small digital kingdom where you are the sole architect, a place of ultimate control and endless tinkering. For years, my humble cloud server has been the reliable heart of my personal projects, hosting everything from utility services to my Magic: The Gathering cube analyzer.

But kingdoms, even digital ones, can get crowded.

As I built and deployed more applications, each on its own subdomain, I started to see the warning signs. Memory usage was steadily climbing. The server, once a spacious home, was starting to feel like a tiny studio apartment with too much furniture. I was approaching a classic engineering crossroads: the dreaded resource bottleneck.

The Crossroads: To Scale Up or To Scale Out?

When your server starts to sweat, the industry playbook presents two standard options.

The Vertical Path: More Money, Less Fun

The first option is to scale vertically. This is the brute-force solution: you open your cloud provider's dashboard, slide a lever, and pay more money for a bigger, more powerful instance. It's simple, effective, and completely soulless. It solves the problem by throwing money at it, a solution that offers zero intellectual satisfaction. Where's the challenge in that?

The Horizontal Path: Maybe Overkill

The second option is to scale horizontally. This is the "real" engineer's answer for large-scale systems. You spin up multiple servers and put a load balancer in front of them to distribute traffic. It's a robust, resilient pattern, but for a personal project with minimal traffic, it's like hiring a full symphony orchestra to play "Happy Birthday." It's elegant, but the complexity and cost are wildly out of proportion to the problem.

I rejected both paths. Both options felt... uninspired. They were correct, sensible, corporate solutions. But this is my personal lab, a place for experimentation and ingenuity. Surely, there had to be a more creative path.

My eyes fell upon a Raspberry Pi 5 sitting on a shelf in my closet. I kid you not, it was sitting right there. The answer, staring back at me. This device is a powerhouse in miniature, with a multi-core ARM processor and gigabytes of RAM, and these days it was doing little more than gathering dust. Meanwhile, my high-availability cloud server was gasping for memory. The juxtaposition was too perfect to ignore.

And then, a third, slightly unhinged idea began to form. What if I could make them work together?

Digging Secret Tunnels

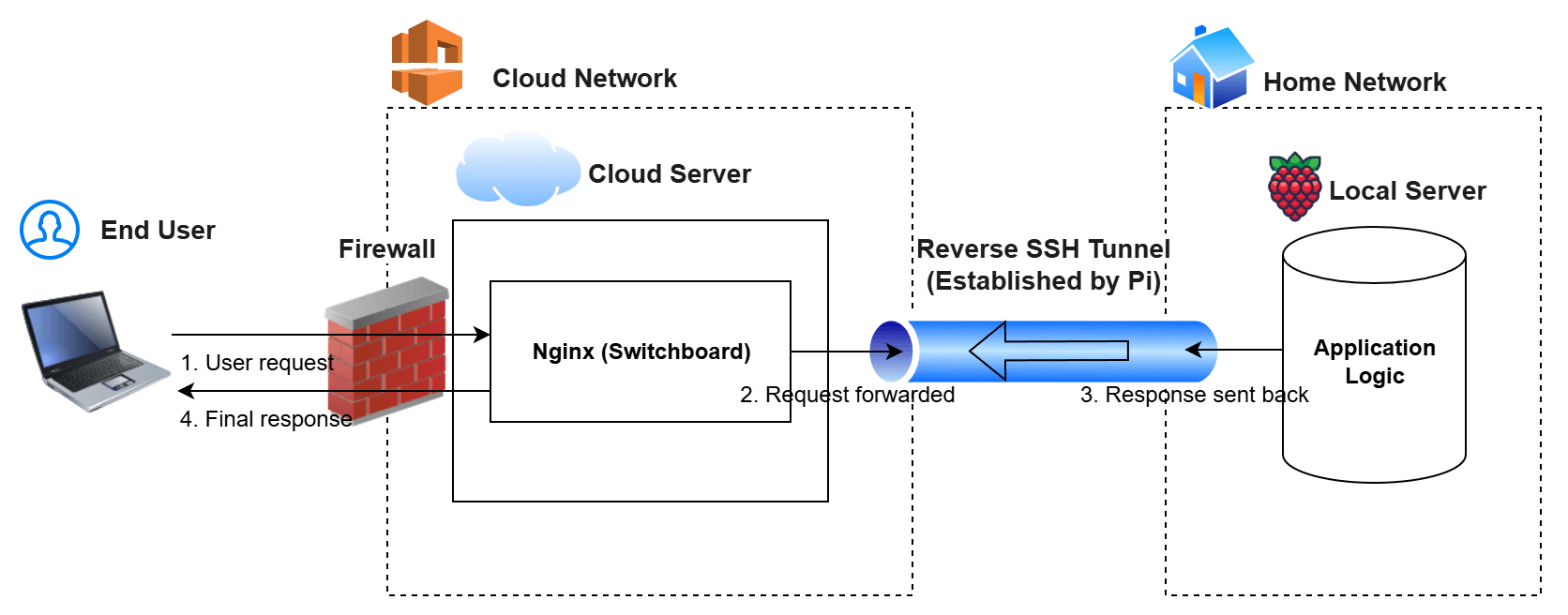

The challenge was obvious: how do you get a public cloud server to safely delegate tasks to a private machine sitting behind a home router, without exposing your home network to the entire internet? The answer, it turns out, is to think in reverse.

You don't have the cloud server call the Pi. You have the Pi call the cloud server.

This is the magic of a Reverse SSH Tunnel. It's a beautifully elegant networking pattern that creates a persistent, secure, outbound-only connection.

The flow is ingenious:

- When the Raspberry Pi boots up, I can have it automatically initiate a secure SSH connection out to my public cloud server. It essentially tells the server, "Hey, I'm here and ready for work. Here's a secret, secure hotline you can use to talk back to me."

- My home router doesn't need to accept any incoming connections. The connection is established from the inside out, so nothing on the home network has to be port-forwarded or exposed. This dramatically reduces the attack surface, as there are no open ports at home for malicious actors on the internet to find or exploit.

- My public server can now act as a smart "switchboard operator." It receives all public traffic as usual, but its Nginx configuration has a special rule: "If a request comes in for a resource-intensive task, don't handle it yourself. Instead, forward it down the secret hotline to the Pi."

- The Pi receives the request, does the heavy lifting with its ample RAM and CPU, and sends the result back up the tunnel.

From the perspective of the end-user, the entire process is seamless and transparent. They only ever talk to the public, high-availability cloud server. They are completely unaware that their request took a quick, secure detour to my closet.
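The "Pi calls the server" step can be sketched with plain ssh. This is a minimal illustration, not the exact command from my setup: the host name, user, and both port numbers are hypothetical placeholders. The script only prints the command so it is safe to run anywhere; running the printed line on the Pi is what would actually open the tunnel.

```shell
#!/bin/sh
# Hypothetical values: replace with your own server, user, and ports.
CLOUD_HOST="tunnel@cloud.example.com"  # assumed SSH user and host on the cloud server
REMOTE_PORT=8080                       # assumed port opened on the cloud server's loopback
LOCAL_PORT=80                          # assumed port on the Pi that receives the traffic

# Build the reverse-tunnel command.
#   -N  do not run a remote command, only forward ports
#   -R  listen on REMOTE_PORT on the server and forward each
#       connection back through the tunnel to LOCAL_PORT on the Pi
tunnel_cmd() {
    printf 'ssh -N -R 127.0.0.1:%s:localhost:%s %s\n' \
        "$REMOTE_PORT" "$LOCAL_PORT" "$CLOUD_HOST"
}

# Dry run: print the command instead of executing it.
tunnel_cmd
```

Binding the remote end to 127.0.0.1 means only processes on the cloud server itself (such as Nginx) can reach the tunnel; it is not exposed on the server's public interface.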

Making Sure Water is Flowing Through the Tunnel

Making this a reality required orchestrating a few key components:

- autossh: A utility that wraps the standard SSH tunnel command and ensures it is persistent. If the connection ever drops due to a network blip, autossh automatically re-establishes it.

- systemd: The standard Linux service manager. I wrote a simple service file on the Pi that ensures the autossh tunnel starts automatically on boot and is kept alive.

- Nginx (The Switchboard): The Nginx instance on the public server was configured to proxy requests for specific subdomains to the local end of the tunnel. The Nginx instance on the Pi was configured to receive requests from the tunnel and route them to the correct local application.
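To make the autossh and systemd pieces concrete, here is a rough sketch of what such a service file could look like. It is an illustration under assumptions, not my actual unit: the unit name, the `pi` user, the host, and the ports are all hypothetical. The script writes the unit into a scratch directory so it can be inspected before anything is installed.

```shell
#!/bin/sh
# Write a sample systemd unit for an autossh reverse tunnel.
# All names, hosts, and ports below are hypothetical placeholders.
UNIT_DIR="${UNIT_DIR:-$(mktemp -d)}"           # scratch directory by default
UNIT_FILE="$UNIT_DIR/reverse-tunnel.service"   # assumed unit name

cat > "$UNIT_FILE" <<'EOF'
[Unit]
Description=Reverse SSH tunnel to the cloud server (autossh)
After=network-online.target
Wants=network-online.target

[Service]
# Assumed local user that owns the SSH key
User=pi
# Keep retrying even if the very first connection attempt fails
Environment=AUTOSSH_GATETIME=0
# -M 0 turns off autossh's monitor port; the ServerAlive options let ssh
# itself notice a dead link, and ExitOnForwardFailure makes ssh exit
# (so it gets restarted) if the remote port cannot be bound
ExecStart=/usr/bin/autossh -M 0 -N -o ServerAliveInterval=30 -o ServerAliveCountMax=3 -o ExitOnForwardFailure=yes -R 127.0.0.1:8080:localhost:80 tunnel@cloud.example.com
Restart=always
RestartSec=10

[Install]
WantedBy=multi-user.target
EOF

echo "Wrote $UNIT_FILE"
```

On the Pi, a file like this would typically be copied to /etc/systemd/system/ and activated with `sudo systemctl enable --now reverse-tunnel.service`. The sketch assumes key-based SSH authentication is already set up, since a service cannot type a password.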

The result is a true hybrid-cloud system. My critical, low-latency, low-memory services (like authentication) run on the highly available cloud instance. The memory-hungry, non-critical workloads (like file processing or image analysis) are seamlessly offloaded to the Pi.
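The switchboard rule on the public server might look something like the following. Again, this is a sketch under assumptions: the subdomain `heavy.example.com`, the file name, and port 8080 for the local end of the tunnel are hypothetical, and TLS is left out for brevity. The script writes the config to a scratch directory rather than touching a live Nginx install.

```shell
#!/bin/sh
# Write a sample Nginx server block that forwards one subdomain into the tunnel.
# The subdomain, file name, and port are hypothetical placeholders.
CONF_DIR="${CONF_DIR:-$(mktemp -d)}"     # scratch directory by default
CONF_FILE="$CONF_DIR/heavy-offload.conf" # assumed config file name

cat > "$CONF_FILE" <<'EOF'
server {
    listen 80;
    server_name heavy.example.com;

    location / {
        # Local end of the reverse tunnel; traffic sent here
        # comes out on the Pi at home
        proxy_pass http://127.0.0.1:8080;

        # Pass the original request details along so the Pi's
        # Nginx can route to the right local application
        proxy_set_header Host $host;
        proxy_set_header X-Real-IP $remote_addr;
        proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
        proxy_set_header X-Forwarded-Proto $scheme;
    }
}
EOF

echo "Wrote $CONF_FILE"
```

Subdomains that stay on the cloud instance keep their usual server blocks; only the memory-hungry ones would point at the tunnel port. If the tunnel is down, Nginx returns a 502 for this subdomain, which is one reason to keep critical services off the Pi.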

Takeaways

What I built was a system that is more resilient, more cost-effective, and infinitely more interesting than simply upgrading an instance type. I'd describe it as a challenge not just of coding, but of architecture, networking, and security.

The real joy wasn't just in solving the immediate problem, but in creating a system that can now grow in more interesting ways. This isn't the end of the story; it's the start of a new chapter, and my metrics dashboard is already giving me ideas for the sequel.